What is AI Mapper?

AI Mapper is an accessible navigation app designed to support people with disabilities when using public transport in London. It focuses on the needs of blind and partially sighted (BPS) individuals as well as wheelchair users, addressing the challenges of navigating complex transit environments. The app combines multimodal features—including vision-based assistance, intelligent journey planning, and real-time wayfinding—to guide users throughout their journey. By integrating both planning and live navigation in one platform, AI Mapper enables more independent, confident, and inclusive travel.

Why Smarter Travel Support Is Needed

Navigating transport systems can be challenging for disabled adults and others who experience the world differently, especially when environments change quickly. Traditional navigation aids struggle in dynamic, real-life settings, making even simple journeys more complicated and sometimes unsafe. When surroundings shift or become cluttered with visual information, confidence drops and travel becomes stressful. GPS positions can be several metres off in busy urban areas, which makes it hard to locate intersections, station entrances, or the correct bus stop. These real-world barriers highlight the need for technology that offers clearer, safer, and more adaptive guidance throughout the journey.

Difficulty Accessing Real-Time Information

Users find it difficult to understand timetables, service updates, and route information quickly.

Difficulty Understanding Navigation

Complex layouts, unclear directions, and poorly structured information make routes hard to understand.

Limited Accessibility Support

Limited audio, assistive support, or alternative formats make information hard to access.

Fragmented Digital Tools

Users must switch between multiple apps for planning, navigation, and accessibility information.

Barriers to Finding Real Help

Assistance, guidance, or emergency information is unclear or difficult to locate.

Challenges Using Technology

Existing digital tools are difficult to use, overwhelming, or not intuitive.

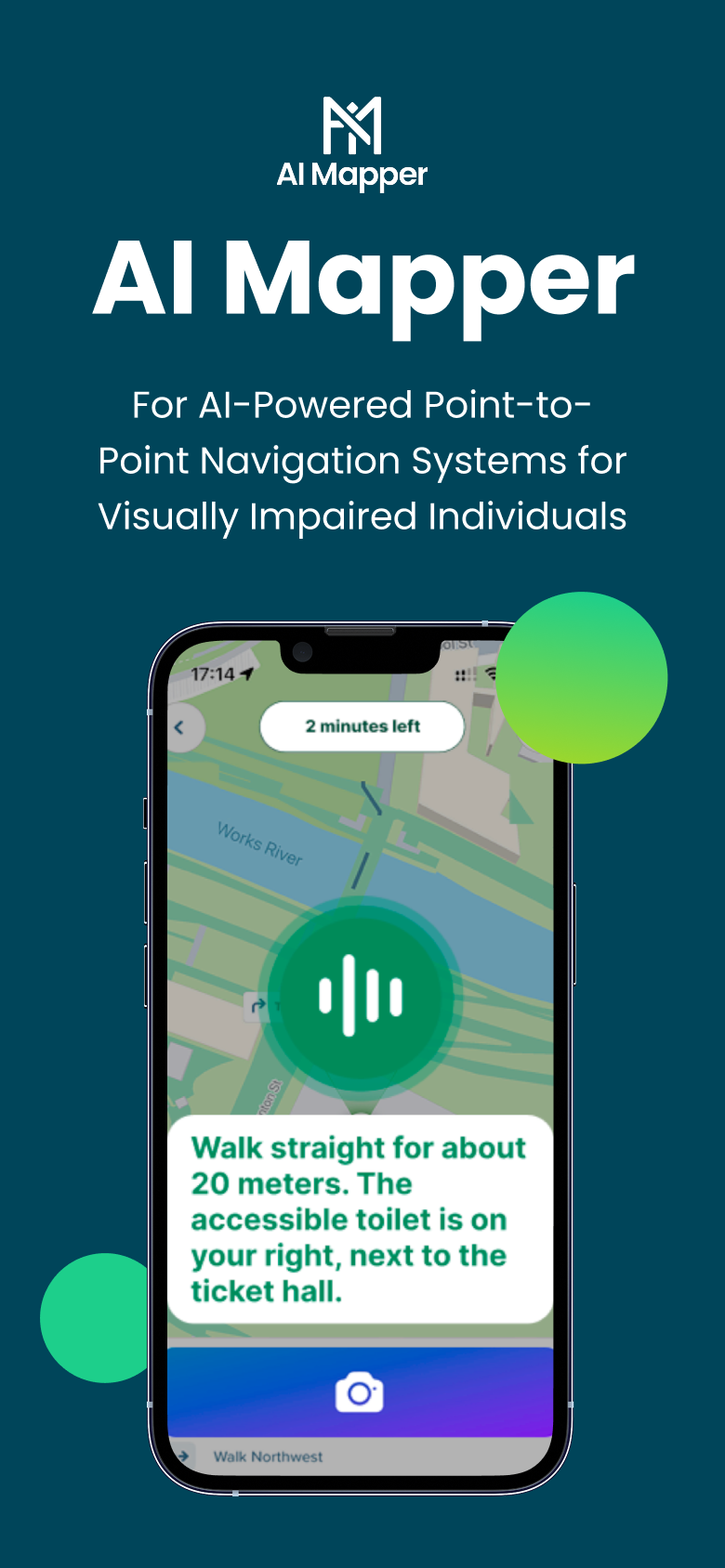

How it works

The device captures visual and sensor input (camera, LiDAR).

Computer vision processes this input to detect obstacles and landmarks.

Mapping APIs generate routing and real-time transit information.

LLM/VLM models convert complex navigation data into short, clear instructions.

The system outputs audio or haptic guidance directly to the user.
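
The five stages above can be sketched as a simple chain of functions. This is a minimal illustration under stated assumptions, not AI Mapper's implementation: every type and function name is hypothetical, the vision, routing, and language-model stages are replaced by trivial stand-ins, and audio output is simulated with print.

```python
from dataclasses import dataclass
from typing import List, Optional


# Hypothetical data types; AI Mapper's real data model is not described here.
@dataclass
class Detection:
    label: str         # e.g. "Stairs", "Exit sign"
    distance_m: float  # distance estimate, e.g. from LiDAR depth


@dataclass
class RouteStep:
    instruction: str   # e.g. "Take the Victoria line northbound"


def capture(frame: dict) -> dict:
    """Stage 1 stand-in: a real app would read camera and LiDAR data."""
    return frame


def detect(sensor_input: dict) -> List[Detection]:
    """Stage 2 stand-in: a real app would run a vision model here."""
    return [Detection(**obj) for obj in sensor_input.get("objects", [])]


def plan(origin: str, destination: str) -> List[RouteStep]:
    """Stage 3 stand-in: a real app would query a routing/transit API."""
    return [RouteStep(f"Travel from {origin} towards {destination}")]


def compose(detections: List[Detection], steps: List[RouteStep]) -> str:
    """Stage 4 stand-in: a fixed template replaces the LLM/VLM step."""
    parts = [steps[0].instruction] if steps else []
    nearest: Optional[Detection] = min(detections, key=lambda d: d.distance_m, default=None)
    if nearest is not None:
        parts.append(f"{nearest.label} about {nearest.distance_m:.0f} metres ahead")
    return ". ".join(parts) + "." if parts else ""


def output(message: str) -> str:
    """Stage 5 stand-in: print instead of text-to-speech or haptic feedback."""
    print(message)
    return message


def run_pipeline(frame: dict, origin: str, destination: str) -> str:
    sensor_input = capture(frame)
    return output(compose(detect(sensor_input), plan(origin, destination)))


# Prints: Travel from King's Cross towards Euston. Bin about 2 metres ahead.
run_pipeline(
    {"objects": [{"label": "Stairs", "distance_m": 4.2}, {"label": "Bin", "distance_m": 1.6}]},
    "King's Cross",
    "Euston",
)
```

Keeping each stage behind its own function means a stand-in can later be swapped for a real model or API call without changing the rest of the chain.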

How we help our target users

Journey Planning

Users can plan accessible public transport routes that take into account their preferences and accessibility needs, the weather, and real-time data such as crowding.
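
One simple way to weigh preferences against real-time data is to convert each factor into an equivalent number of minutes and pick the option with the lowest total. The sketch below assumes hypothetical RouteOption and Preferences types, a crowding score between 0 and 1 from a live feed, and arbitrary default weights; it is not AI Mapper's actual planning logic.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class RouteOption:
    name: str
    duration_min: float      # total journey time
    crowding: float          # assumed 0.0 (empty) to 1.0 (very crowded), from a live feed
    outdoor_walk_min: float  # minutes spent walking outside
    step_free: bool


@dataclass
class Preferences:
    needs_step_free: bool = False
    crowding_weight: float = 10.0   # extra "minutes" of cost per unit of crowding (assumed)
    rain_walk_weight: float = 1.0   # extra cost per outdoor minute when raining (assumed)


def score(route: RouteOption, prefs: Preferences, raining: bool) -> float:
    """Lower is better: journey time plus penalties for crowding and wet walking."""
    cost = route.duration_min + prefs.crowding_weight * route.crowding
    if raining:
        cost += prefs.rain_walk_weight * route.outdoor_walk_min
    return cost


def choose_route(routes: List[RouteOption], prefs: Preferences,
                 raining: bool = False) -> Optional[RouteOption]:
    """Drop routes that fail hard requirements, then pick the lowest score."""
    candidates = [r for r in routes if r.step_free or not prefs.needs_step_free]
    return min(candidates, key=lambda r: score(r, prefs, raining), default=None)


routes = [
    RouteOption("A", duration_min=20, crowding=0.9, outdoor_walk_min=2, step_free=False),
    RouteOption("B", duration_min=24, crowding=0.2, outdoor_walk_min=6, step_free=True),
]
```

With the default weights, route B (cost 26) beats the faster but busier route A (cost 29); in the rain A edges ahead (31 against 32) unless the user requires step-free access, which rules A out entirely.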

Real-time Wayfinding

The app provides step-by-step guidance during travel, helping users navigate stations, platforms, and interchanges.
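
Turning a route into step-by-step prompts needs, at minimum, the distance and direction from the user's position to the next waypoint. The sketch below shows one common approach using the haversine distance and the initial great-circle bearing; the waypoint source, the 20° "straight ahead" tolerance, and all function names are assumptions. Because GPS fixes can be several metres off, a real system would likely combine this with visual cues rather than rely on it alone.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in metres between two lat/lon points."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def initial_bearing_deg(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Compass bearing (0 = north, 90 = east) from point 1 towards point 2."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dlmb = math.radians(lon2 - lon1)
    x = math.sin(dlmb) * math.cos(p2)
    y = math.cos(p1) * math.sin(p2) - math.sin(p1) * math.cos(p2) * math.cos(dlmb)
    return (math.degrees(math.atan2(x, y)) + 360) % 360


def turn_instruction(heading_deg: float, target_bearing_deg: float) -> str:
    """Map the angle between facing direction and target onto a short prompt."""
    # Relative angle in [-180, 180): positive means the target is to the right.
    rel = (target_bearing_deg - heading_deg + 540) % 360 - 180
    if abs(rel) <= 20:
        return "Continue straight ahead"
    if abs(rel) >= 160:
        return "Turn around"
    return "Turn right" if rel > 0 else "Turn left"


def next_step(lat: float, lon: float, heading_deg: float,
              wp_lat: float, wp_lon: float) -> str:
    """Hypothetical helper combining direction and distance into one prompt."""
    distance = haversine_m(lat, lon, wp_lat, wp_lon)
    turn = turn_instruction(heading_deg, initial_bearing_deg(lat, lon, wp_lat, wp_lon))
    return f"{turn}, then walk about {distance:.0f} metres."
```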

Conversational Interaction

Users can interact with the app through natural language (voice or text), reducing complexity and making the system easier to use.

Visual Assistance

Using the phone camera, the app interprets surroundings—such as signage, exits, obstacles, and accessibility features—and translates them into actionable guidance.
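
A common way to make camera detections actionable for blind and partially sighted travellers is to report direction as a clock position. The sketch below assumes a detector that returns a bounding-box centre in pixels, a distance estimate (for example from LiDAR), and a 70° horizontal field of view; these values and the function names are hypothetical, and the angle uses a simple pinhole-camera model.

```python
import math


def bearing_deg(cx: float, image_width: float, hfov_deg: float = 70.0) -> float:
    """Horizontal angle of a pixel column from the camera's centre line (pinhole model)."""
    half_w = image_width / 2
    focal_px = half_w / math.tan(math.radians(hfov_deg) / 2)
    return math.degrees(math.atan((cx - half_w) / focal_px))


def clock_hour(bearing: float) -> int:
    """Convert an angle (negative = left, positive = right) to a clock position."""
    hour = round(bearing / 30) % 12  # 30 degrees per clock hour
    return 12 if hour == 0 else hour


def describe(label: str, cx: float, image_width: float,
             distance_m: float, hfov_deg: float = 70.0) -> str:
    """Build a short spoken-style message for one detected object."""
    hour = clock_hour(bearing_deg(cx, image_width, hfov_deg))
    return f"{label} at {hour} o'clock, about {distance_m:.0f} metres."


print(describe("Stairs", cx=500, image_width=1000, distance_m=3.4))
# Stairs at 12 o'clock, about 3 metres.
```

Clock positions keep messages short, which suits audio output in noisy stations.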

Accessibility Support

The app helps identify step-free routes, elevators, and less crowded paths, particularly benefiting wheelchair users and those who struggle in busy environments.
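
Finding a step-free path through a station can be framed as a shortest-path search that ignores connections with stairs or escalators. The sketch below runs Dijkstra's algorithm on a small hypothetical station graph; the graph format, the connection kinds, and the walking times are all assumptions for illustration.

```python
import heapq
from typing import Dict, List, Optional, Tuple

# Hypothetical station graph: node -> [(neighbour, walking minutes, connection kind)]
Graph = Dict[str, List[Tuple[str, float, str]]]

# Connection kinds treated as not step-free (an assumption for this sketch).
NOT_STEP_FREE = {"stairs", "escalator"}


def shortest_path(graph: Graph, start: str, goal: str,
                  step_free: bool = False) -> Optional[Tuple[float, List[str]]]:
    """Dijkstra's algorithm; optionally skips connections that are not step-free."""
    queue = [(0.0, start, [start])]
    best = {start: 0.0}
    while queue:
        cost, node, path = heapq.heappop(queue)
        if node == goal:
            return cost, path
        if cost > best.get(node, float("inf")):
            continue  # stale queue entry
        for neighbour, minutes, kind in graph.get(node, []):
            if step_free and kind in NOT_STEP_FREE:
                continue
            new_cost = cost + minutes
            if new_cost < best.get(neighbour, float("inf")):
                best[neighbour] = new_cost
                heapq.heappush(queue, (new_cost, neighbour, path + [neighbour]))
    return None  # goal unreachable under the given constraints


station: Graph = {
    "Entrance": [("Ticket hall", 1, "level")],
    "Ticket hall": [("Platform 1", 1, "stairs"), ("Lift lobby", 2, "level")],
    "Lift lobby": [("Platform 1", 2, "lift")],
}
```

Without the constraint the search takes the stairs (2 minutes); with step_free=True it routes through the lift lobby (5 minutes). Crowding could be folded in the same way, by adding a penalty to each connection's cost.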

Technology Functionality

AI Mapper combines mobile sensors, computer vision, and advanced language models to deliver real‑time, context‑aware navigation that adapts to complex transport environments. It uses smartphone hardware, mapping APIs, and multimodal AI to interpret surroundings, detect obstacles, understand spatial layouts, and convert this information into clear, concise travel guidance.

Copyright © 2026 AIMapper. All rights reserved.